Introduction: From Digital AI to AI That Moves

NVIDIA’s annual developer conference GTC 2026 ran March 16–19, 2026. The theme: Physical AI.

Where ChatGPT-style AI operates in digital space, Physical AI acts in, touches, and manipulates the real world — factory robots, self-driving vehicles, home assistants. Every category of “AI that moves things” was addressed in a single wave of announcements.

- Jensen Huang declared: “The ChatGPT moment for robotics is here”

- Core platforms are now complete: GR00T N1.7 (general-purpose robot AI), Cosmos 3, Isaac Lab 3.0

- The world’s four largest industrial robot makers — FANUC, ABB, KUKA, YASKAWA — simultaneously adopted NVIDIA’s stack, signaling the shift from demo to production

🎤 “The ChatGPT Moment for Robotics Is Here”

Jensen Huang opened the keynote with a declaration:

“The ChatGPT moment for robotics is here.”

Just as ChatGPT brought generative AI to the mainstream in 2022, robot AI is hitting the same inflection point right now.

“Physical AI has arrived — every industrial company will become a robotics company.”

That might sound like hyperbole. After reviewing the actual announcements, it’s not.

NVIDIA’s most recent quarterly revenue hit a record $68 billion. In a WSJ interview, Huang said AI chip sales “will reach $1 trillion scale as the beginning of a new computing era.” Physical AI is the next growth engine underpinning that confidence.

🤖 Key Announcements

Isaac GR00T N1.7

A general-purpose foundation model for humanoid robots, now available under a commercial license via early access.

- Multimodal input: text, images, video

- Capable of grasping, transporting, and bimanual coordination tasks

- Adopted by LG Electronics, NEURA Robotics, and others

GR00T = Generalist Robot 00 Technology — a model designed to handle any physical task from a single foundation.

GR00T N2 is also previewed for release later in 2026, with a claimed 2× improvement in success rate on novel tasks.

Note: the commercial license pricing model (per-inference token billing vs. per-device royalty) has not been publicly disclosed. Organizations considering industrial deployment should contact NVIDIA sales directly.

Unlike traditional robots specialized for one task, GR00T targets the ability to handle diverse physical tasks from a single model — the way ChatGPT answers diverse questions. It’s trained on a large-scale dataset combining real demonstrations, synthetic data, and internet video.

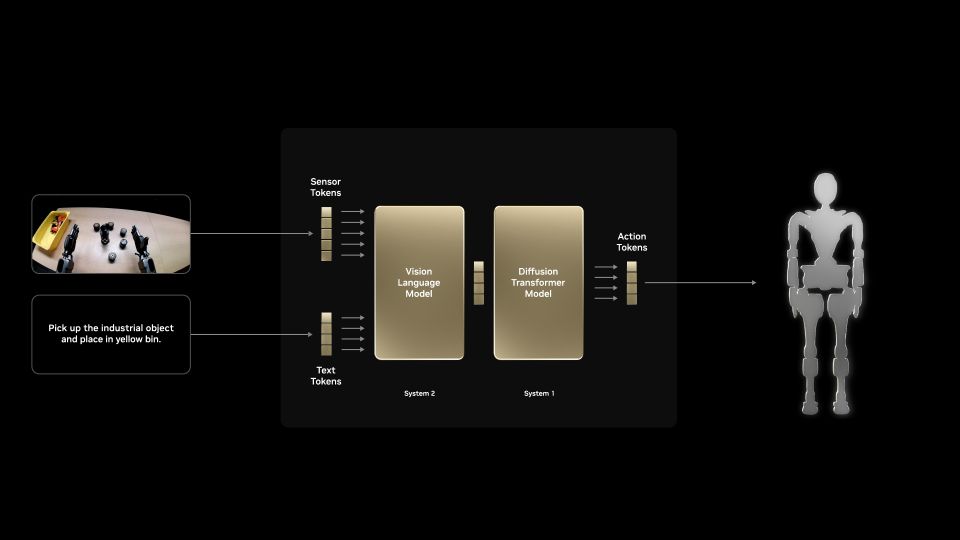

GR00T dual-system architecture: Camera + text input → Vision Language Model (System 2, ‘thinking brain’) → Diffusion Transformer (System 1, ‘moving brain’) → Robot action. (Source: NVIDIA Developer Blog)

GR00T uses a two-system architecture. System 2 (VLM) runs at 10 Hz, slowly deciding “what to do next.” System 1 (Diffusion Transformer) runs at high frequency, computing “how to move the body.” The analogy: System 2 is the cerebral cortex; System 1 is the cerebellum and spinal cord.
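To make the two-rate idea concrete, here is a toy control loop. It is a sketch under stated assumptions, not NVIDIA's code: `slow_planner` and `fast_controller` are hypothetical stand-ins for the VLM and the Diffusion Transformer, and the 120 Hz fast rate is an assumed value chosen to divide evenly by 10 Hz. What it shows is the structure: the slow loop refreshes a plan every 12 fast ticks, and the fast loop acts on whichever plan is current.

```python
"""Toy dual-rate loop echoing GR00T's System 2 / System 1 split (not NVIDIA code).

Assumptions: the slow planner runs at 10 Hz (the rate quoted above), the fast
controller at a hypothetical 120 Hz, and both functions are simple stand-ins.
"""
from dataclasses import dataclass

SLOW_HZ = 10   # System 2 ("what to do next")
FAST_HZ = 120  # System 1 ("how to move"), assumed rate


@dataclass
class Plan:
    target: float  # e.g. a desired gripper position chosen by the slow loop


def slow_planner(position: float, instruction: str) -> Plan:
    # Hypothetical stand-in for the VLM: map an instruction to a goal.
    return Plan(target=1.0 if instruction == "reach" else 0.0)


def fast_controller(plan: Plan, position: float, gain: float = 0.2) -> float:
    # Hypothetical stand-in for the action head: step toward the current goal.
    return gain * (plan.target - position)


def run(seconds: float, instruction: str) -> tuple[float, int, int]:
    position = 0.0
    plan = slow_planner(position, instruction)
    slow_calls, fast_calls = 1, 0
    ticks_per_plan = FAST_HZ // SLOW_HZ  # 12 fast ticks per slow update
    for tick in range(int(seconds * FAST_HZ)):
        if tick > 0 and tick % ticks_per_plan == 0:
            plan = slow_planner(position, instruction)  # re-plan at 10 Hz
            slow_calls += 1
        position += fast_controller(plan, position)     # act at 120 Hz
        fast_calls += 1
    return position, slow_calls, fast_calls
```

Calling `run(1.0, "reach")` re-plans 10 times and issues 120 actions in one simulated second. A real robot could run the two loops asynchronously on separate threads or processors instead of interleaving them in one loop; the rate split is the part that carries over.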

Two Key Blueprints

What distinguishes GR00T is that the data generation pipeline is included, not just the model:

- GR00T-Mimic Blueprint: Auto-generates synthetic manipulation data. Minimal real-world demonstrations needed — training data can be produced at scale in simulation.

- GR00T-Dreams Blueprint: Trains for novel tasks across diverse environments, enabling adaptation to situations the robot has never encountered.
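The core trick behind synthetic manipulation data can be sketched with MimicGen, a published NVIDIA research technique that the Mimic tooling is assumed here to resemble: record a demo relative to the object being handled, then replay that object-relative motion at new object poses. The 2-D version below is illustrative only; real pipelines work with full 6-DoF poses, split demos into per-object subtasks, and keep only generated trajectories that succeed in simulation.

```python
"""MimicGen-style demo retargeting in 2-D (a sketch, not NVIDIA's blueprint code).

Assumption: each demo is a list of end-effector (x, y) waypoints recorded
while approaching one object whose planar pose is (x, y, theta).
"""
import math

Point = tuple[float, float]
Pose = tuple[float, float, float]  # (x, y, theta in radians)


def to_object_frame(p: Point, obj: Pose) -> Point:
    # Express a world-frame point in the object's local frame (rotate by -theta).
    ox, oy, th = obj
    dx, dy = p[0] - ox, p[1] - oy
    c, s = math.cos(th), math.sin(th)
    return (c * dx + s * dy, -s * dx + c * dy)


def to_world_frame(p: Point, obj: Pose) -> Point:
    # Inverse transform: object-local point back into the world frame.
    ox, oy, th = obj
    c, s = math.cos(th), math.sin(th)
    return (ox + c * p[0] - s * p[1], oy + s * p[0] + c * p[1])


def retarget(demo: list[Point], src_obj: Pose, new_obj: Pose) -> list[Point]:
    # Keep the hand motion fixed relative to the object, replay it at the new pose.
    return [to_world_frame(to_object_frame(p, src_obj), new_obj) for p in demo]


def generate(demo: list[Point], src_obj: Pose, new_poses: list[Pose]) -> list[list[Point]]:
    # One human demo -> many synthetic demos, one per sampled object pose.
    return [retarget(demo, src_obj, pose) for pose in new_poses]
```

For example, `generate([(0.0, 0.0), (0.5, 0.0), (0.9, 0.0)], (1.0, 0.0, 0.0), [(0.0, 1.0, math.pi / 2)])` turns an approach along the x-axis toward an object at (1, 0) into an approach along the y-axis toward an object at (0, 1), ending 0.1 m short of it, just as the original demo did.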

The most surprising claim: post-training on a new task reportedly needs only 20–40 demonstrations. Show the robot a new task 20 times and, in principle, it learns it.

Traditional robot programming meant manually registering hundreds of teach points per task. That is shifting toward “show it 20 times and it’s done”, a fundamental change for manufacturing floor changeovers.

Cosmos 3

A world foundation model that helps robots understand physical laws.

Before deploying a robot in the real world, Cosmos 3 enables simulation of “how will this cup move when I pick it up?” and “how does it fall over?” — training in virtual environments tens of thousands of times, dramatically reducing development cost and time.

Combined with NVIDIA Omniverse, digitally replicating an entire production line and testing new robot movements in a “virtual factory” before physical deployment is becoming standard practice.
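Cosmos learns answers to questions like “how does it fall over?” from data, but the underlying physics can be shown with a hand-written rule. This sketch, which is not how Cosmos works, computes the quasi-static tip-over angle of an object on a tilting surface: it tips once the vertical line through its center of mass passes the edge of its base, i.e. when tan θ exceeds (base width / 2) / (center-of-mass height). Assumptions: rigid object, symmetric footprint, enough friction that it does not slide first, and slow tilting. The cup dimensions are hypothetical.

```python
"""Quasi-static tip-over check for an object on a tilting surface.

A hand-written rigid-body rule, not Cosmos. Assumptions: symmetric footprint,
no sliding before tipping, and tilting slow enough to ignore dynamics.
"""
import math


def tip_angle_deg(base_width_m: float, com_height_m: float) -> float:
    # Tipping starts when tan(theta) = (base_width / 2) / com_height.
    return math.degrees(math.atan2(base_width_m / 2, com_height_m))


def tips_over(tilt_deg: float, base_width_m: float, com_height_m: float) -> bool:
    # True once the surface tilt exceeds the object's tip-over angle.
    return tilt_deg > tip_angle_deg(base_width_m, com_height_m)


# Hypothetical cup: 6 cm base, center of mass 5 cm above the surface.
cup_limit = tip_angle_deg(0.06, 0.05)
```

For the hypothetical cup the limit is about 31°, and a wider base or lower center of mass raises it. A learned world model has to capture this kind of relationship, plus sliding, sloshing, and collisions, without anyone writing the formula down.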

Isaac Lab 3.0

Integrated robot training platform (early access).

Features the Newton physics engine with support for complex manipulation tasks like cable routing and parts assembly at scale. Isaac Lab connects GR00T, Cosmos, and Omniverse — the unified training infrastructure.

Jetson T4000 Module

Edge AI module with Blackwell architecture (announced at CES 2026):

| Spec | Value |
|---|---|
| Architecture | NVIDIA Blackwell |
| Performance | 4× previous gen (Jetson Orin) |
| Power consumption | Under 70 W |
| Price | $1,999 (1,000-unit lot, industrial pricing) |

NVIDIA Jetson T4000 module (Blackwell architecture)

70 W is a non-trivial load for autonomous mobile robots (AMRs). Typical AMR batteries hold 1–2 kWh; running flat out, the module alone would drain such a pack in roughly 14–29 hours, and on top of drive motors and sensors it can cut runtime per charge by an hour or more, depending on the robot’s other loads. Power management design is unavoidable: “smarter AI, shorter battery life” will remain a real engineering constraint for the foreseeable future.
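The runtime figures follow from simple energy arithmetic: runtime is stored energy divided by average power draw. This sketch assumes constant loads, a fully usable pack, and a hypothetical 200 W base load for drive motors and sensors; none of these numbers come from NVIDIA or from a specific AMR.

```python
"""Back-of-the-envelope AMR runtime with and without an AI module.

Assumptions: constant average loads, 100% usable battery capacity, and a
hypothetical 200 W base load. Real designs need duty-cycle data and margins.
"""


def runtime_hours(battery_wh: float, base_load_w: float, ai_load_w: float = 0.0) -> float:
    # Runtime = stored energy / total average power draw.
    return battery_wh / (base_load_w + ai_load_w)


def runtime_lost_hours(battery_wh: float, base_load_w: float, ai_load_w: float) -> float:
    # Hours of runtime given up by adding the AI load to the base load.
    return runtime_hours(battery_wh, base_load_w) - runtime_hours(battery_wh, base_load_w, ai_load_w)


for pack_wh in (1000, 2000):
    alone = runtime_hours(pack_wh, base_load_w=0.0, ai_load_w=70.0)  # module only
    lost = runtime_lost_hours(pack_wh, base_load_w=200.0, ai_load_w=70.0)
    print(f"{pack_wh} Wh pack: module alone {alone:.1f} h, runtime lost {lost:.1f} h")
```

Under those assumptions the 70 W module costs roughly 1.3 hours of runtime on a 1 kWh pack and 2.6 hours on a 2 kWh pack. The heavier the rest of the robot’s load, the smaller the AI module’s share of the loss.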

Blackwell is the NVIDIA GPU architecture announced in 2024. It spans data center GPUs (B100/B200), desktop graphics (GeForce RTX 50 series and RTX PRO Blackwell series), and now edge AI with the Jetson T4000. In each line it brings a substantial AI inference improvement over the architecture it replaces: Hopper in the data center, Ada Lovelace on the desktop, and Ampere in Jetson Orin.

🏭 The World’s Largest Robot Makers Adopt — All at Once

The headline isn’t just announcements — actual deployments have started:

- FANUC, ABB, KUKA, YASKAWA (the world’s four largest industrial robot makers) have integrated NVIDIA Omniverse and Isaac into their virtual commissioning workflows

- Foxconn semiconductor manufacturing lines are running Skild AI robot intelligence with NVIDIA Blackwell

- Boston Dynamics, LG Electronics, NEURA Robotics publicly demonstrated new robot models with Jetson Thor

FANUC and YASKAWA are top-tier global suppliers of machining centers and welding robots: heavy industrial equipment with conservative product cycles and long qualification processes. When these companies adopt NVIDIA’s AI stack, it signals that Physical AI has moved past the demo stage. A startup saying “it can be done” carries different weight than FANUC and YASKAWA doing it in production.

🤝 Hugging Face Integration Accelerates Open-Source Development

NVIDIA and Hugging Face announced integration of Isaac and GR00T technology into the LeRobot open-source framework.

- NVIDIA robot developer community: 2 million

- Hugging Face global developer community: 13 million

These two communities merging could rapidly democratize robot AI development. The LeRobot repository already hosts GR00T N1.5 integration models (29 models, 171 datasets) — downloadable by anyone worldwide to deploy on their own hardware.

🔧 Hardware Engineer’s Perspective

“Humanoid robots don’t apply to my work” — that reaction is understandable. But stepping back, this trajectory connects directly to hobby electronics and IoT.

Jetson’s Evolution = Evolution of Deployable AI

Individual makers already use the Jetson Orin Nano developer kit (launched at $499; the Orin Nano Super kit sells for $249) for AI camera and voice recognition projects. The T4000 is priced for industry, but NVIDIA has historically followed flagship Jetson modules with lower-cost developer kits 1–2 years later, so a maker-friendly T4000-class option looks likely.

If the roughly $2,000 module price is a barrier, Isaac Sim (free to use) lets you experiment with the “brain” without buying robot hardware, although it still needs an NVIDIA RTX GPU.

The “20 Demonstrations” Implication

GR00T’s post-training capability (learn a new task from 20–40 demonstrations) is genuinely significant.

Traditional robot arm reprogramming for a new task required hours with a teach pendant, manually registering hundreds of points. Replacing that with “show it 20 times by hand” fundamentally changes manufacturing floor changeover costs. Flexible small-batch production becomes dramatically more feasible.

Safety and Security Problems Are Unsolved

As Physical AI spreads into factories and homes, safety standards and cybersecurity become unavoidable. Industrial robots must meet ISO 10218 (robot safety) and IEC 61508 (functional safety) requirements, but learned policies are hard to verify exhaustively, and diffusion-based action models sample their outputs rather than executing a fixed program. “The AI does the right thing 99.9% of the time” may not satisfy factory safety certification requirements. How NVIDIA navigates the certification problem will directly determine deployment speed.
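The “99.9%” figure is worth working through. At 10 decisions per second (the System 2 rate mentioned earlier), an 8-hour shift is 10 × 3,600 × 8 = 288,000 decisions, so a 0.1% error rate still means about 288 wrong decisions per shift. One common engineering response, not something NVIDIA announced here, is to wrap the learned policy in a small deterministic envelope that a conventional safety analysis can reason about. The sketch clamps commanded joint velocities and triggers a protective stop if any joint would leave its allowed range on the next tick. All limits are hypothetical, and a wrapper like this does not by itself satisfy ISO 10218 or IEC 61508.

```python
"""Deterministic safety envelope around a learned policy (illustrative only).

Assumptions: actions are joint velocities in rad/s, the limits below are
hypothetical, and a protective stop is modelled as commanding zero velocity.
"""
from dataclasses import dataclass


@dataclass(frozen=True)
class Limits:
    max_speed: float = 0.5   # rad/s per joint (hypothetical)
    min_pos: float = -1.5    # rad, soft joint limit (hypothetical)
    max_pos: float = 1.5     # rad
    dt: float = 1 / 120      # control period in seconds (hypothetical)


def shield(positions: list[float], action: list[float], lim: Limits) -> tuple[list[float], bool]:
    """Return a safe action and whether a protective stop was triggered."""
    # 1) Clamp every commanded velocity into [-max_speed, max_speed].
    clamped = [max(-lim.max_speed, min(lim.max_speed, v)) for v in action]
    # 2) If any joint would leave its allowed range next tick, stop all joints.
    for q, v in zip(positions, clamped):
        nxt = q + v * lim.dt
        if not (lim.min_pos <= nxt <= lim.max_pos):
            return [0.0] * len(action), True
    return clamped, False


def expected_bad_decisions(error_rate: float, hz: float, hours: float) -> float:
    # Expected erroneous decisions per shift = rate * decisions/s * seconds.
    return error_rate * hz * hours * 3600
```

For example, `shield([0.0, 0.0], [2.0, -0.1], Limits())` returns `([0.5, -0.1], False)`: the runaway command is clamped and the other passes through. The envelope never needs to understand the policy; it only checks outputs against fixed rules, which is what makes it tractable to analyze.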

The Sim-to-Real Gap

The most technically interesting open question is the Sim-to-Real gap — the difference between simulation and reality. However precisely Cosmos 3 models physical laws, real factory floors have friction variance, part-to-part variability, dust, and vibration. Whether Cosmos and Isaac Lab can close this gap is the central technical challenge of the next few years. Complete closure isn’t realistic, but “80% in simulation, 20% fine-tuned on the factory floor” as a standard development model would already reduce costs dramatically.
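A standard way to attack this gap, although the article does not say which methods Cosmos or Isaac Lab use, is domain randomization: rather than simulating one friction value, sample many, so a setting chosen in simulation still holds across real-world variance. The sketch applies it to grip force for a two-finger grasp, which holds when friction on both contact faces supports the part’s weight, 2μF ≥ mg. The friction and mass ranges are hypothetical stand-ins for the variance described above.

```python
"""Domain-randomized choice of two-finger grip force (illustrative sketch).

Physics assumption: a parallel-jaw grasp holds when 2 * mu * F >= m * g.
The friction and mass ranges are hypothetical.
"""
import random

G = 9.81  # gravitational acceleration, m/s^2


def grasp_holds(grip_force_n: float, mu: float, mass_kg: float) -> bool:
    # Friction on both fingers must support the part's weight.
    return 2 * mu * grip_force_n >= mass_kg * G


def success_rate(grip_force_n: float, trials: int = 10_000, seed: int = 0) -> float:
    rng = random.Random(seed)  # same seed -> same randomized "worlds" per call
    ok = 0
    for _ in range(trials):
        mu = rng.uniform(0.3, 0.8)      # randomized friction coefficient
        mass = rng.uniform(0.15, 0.25)  # randomized part mass in kg
        ok += grasp_holds(grip_force_n, mu, mass)
    return ok / trials


def pick_force(target: float = 0.99, step: float = 0.1, max_force: float = 20.0) -> float:
    # Smallest force on a 0.1 N grid that meets the target across randomized trials.
    for k in range(1, int(round(max_force / step)) + 1):
        force = k * step
        if success_rate(force) >= target:
            return force
    raise ValueError("no force in range meets the target")
```

With these ranges the worst case (μ = 0.3, m = 0.25 kg) needs about 4.09 N, so `pick_force()` returns a value at or below 4.1 N. Widening the friction range in simulation pushes the chosen force up, which is the trade-off between robustness and, for example, crushing a delicate part.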

🌍 Where This Goes

Factories Become “Reconfigurable”

Current factories are fixed assets once production lines are established. Robot changeovers require extensive reprogramming. If GR00T + Cosmos matures to the point where “the robot that welded car doors yesterday can be assembling different parts today” via a few on-floor demonstrations, manufacturing fundamentally changes. This capability is ideally matched to the trend toward small-batch, custom-order production.

Home Robots Become Unremarkable

When camera phones first appeared, a camera built into a phone felt unusual. Now a smartphone without a camera is inconceivable.

In 10–15 years, an “AI-free robot arm” may feel equally strange. GR00T’s descendants doing cooking assistance, elder care, and childcare support is no longer science fiction.

Open-Source Robots Chase Industrial Performance

In LLMs, open-source models (LLaMA, Mistral) are rapidly catching closed models like GPT-4. If the same happens in robotics — if the LeRobot community publishes a model that rivals industrial GR00T within a few years — high-end robot AI becomes accessible to researchers and individual developers on modest budgets. That’s an exciting trajectory from an electronics maker’s perspective.

GR00T N1.7: early access commercial license active. GR00T N2, Isaac Lab 3.0 official release, and Jetson T4000 general availability: watch for upcoming announcements. GR00T N1.5 integration models already downloadable on LeRobot (Hugging Face).

✅ Summary

| Announcement | Overview |
|---|---|
| GR00T N1.7 | General-purpose robot AI model for humanoids (commercial license via early access) |
| GR00T N2 (previewed) | Planned for later in 2026; claimed 2× success rate on novel tasks |
| GR00T-Mimic / Dreams Blueprint | Synthetic data generation + diverse environment adaptation tooling |
| Cosmos 3 | Foundation model for robot understanding of the physical world |
| Isaac Lab 3.0 | Large-scale robot training platform (early access) |
| Jetson T4000 | 4× previous gen; Blackwell-based edge AI module |

Just as ChatGPT marked the turning point for language AI, GTC 2026 may be remembered as the turning point for Physical AI.

The era of “AI lives in the cloud” is giving way to “AI moves things.” Robots becoming part of everyday life is no longer a distant prospect.